Simple and Scalable Nearest Neighbor Machine Translation

Published in ICLR, 2023

Yuhan Dai*, Zhirui Zhang*, Qiuzhi Liu, Qu Cui, Weihua Li, Yichao Du, Tong Xu

Abstract

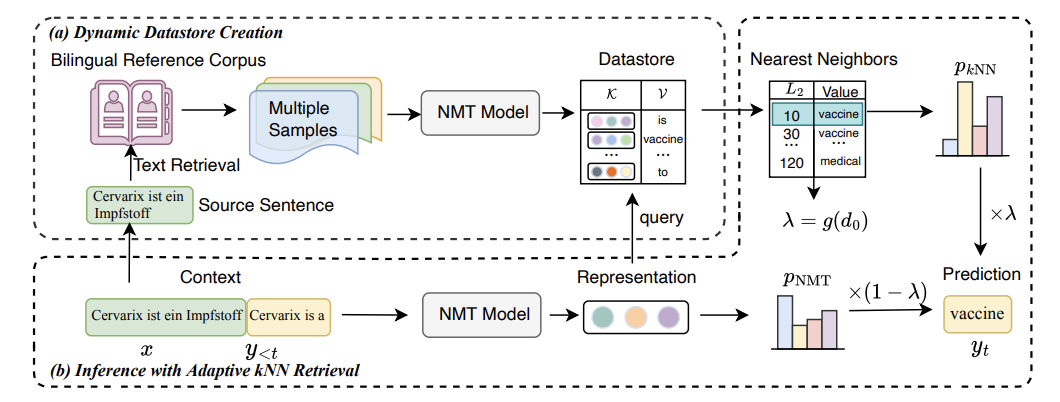

$k$NN-MT is a straightforward yet powerful approach to fast domain adaptation: it augments a pre-trained neural machine translation (NMT) model with domain-specific token-level $k$-nearest-neighbor ($k$NN) retrieval, adapting to a new domain without retraining. Despite being conceptually attractive, $k$NN-MT is burdened with massive storage requirements and high computational complexity, since it conducts nearest-neighbor searches over the entire reference corpus. In this paper, we propose a simple and scalable nearest neighbor machine translation framework that drastically improves the decoding and storage efficiency of $k$NN-based models while maintaining translation performance. To this end, we dynamically construct an extremely small datastore for each input via sentence-level retrieval, which avoids searching the entire datastore as vanilla $k$NN-MT does. On top of this, we introduce a distance-aware adapter that adaptively incorporates the $k$NN retrieval results into the pre-trained NMT model. Experiments on machine translation in two general settings, static domain adaptation and online learning, demonstrate that our approach not only reaches almost 90% of the NMT model's decoding speed without performance degradation, but also significantly reduces the storage requirements of $k$NN-MT.
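To make the mechanism in the abstract concrete, the following is a minimal sketch of a single decoding step, not the implementation from the paper. It assumes the small per-input datastore has already been built from the retrieved sentence pairs as a list of (key vector, target token) entries. The function names, the squared-L2 distance, the softmax over negative distances, and the linear mapping from nearest-neighbor distance to interpolation weight are all illustrative assumptions.

```python
import math
from collections import defaultdict


def squared_l2(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def knn_distribution(query, datastore, k, temperature):
    """Turn the k nearest datastore entries into a token distribution.

    datastore: list of (key_vector, target_token) pairs (assumed format).
    Returns (token -> probability dict, distance of the nearest entry).
    """
    if not datastore:
        return {}, math.inf
    scored = sorted((squared_l2(query, key), tok) for key, tok in datastore)[:k]
    nearest = scored[0][0]
    # Subtract the nearest distance before exponentiating for numerical stability.
    weights = [math.exp(-(d - nearest) / temperature) for d, _ in scored]
    total = sum(weights)
    probs = defaultdict(float)
    for (_, tok), w in zip(scored, weights):
        probs[tok] += w / total
    return dict(probs), nearest


def distance_aware_weight(nearest_distance, temperature):
    """Hypothetical distance-aware rule: trust retrieval more when the
    nearest neighbor is close, and fall back to the NMT model when it is far."""
    return max(0.0, 1.0 - nearest_distance / temperature)


def interpolate(nmt_probs, knn_probs, lam):
    """Mix the NMT and kNN distributions with weight lam on the kNN side."""
    vocab = set(nmt_probs) | set(knn_probs)
    return {
        tok: lam * knn_probs.get(tok, 0.0) + (1.0 - lam) * nmt_probs.get(tok, 0.0)
        for tok in vocab
    }


# Toy example: a three-entry datastore standing in for the per-input datastore.
datastore = [([0.0, 0.0], "Haus"), ([1.0, 0.0], "Haus"), ([5.0, 5.0], "Heim")]
query = [0.5, 0.0]
nmt_probs = {"Haus": 0.4, "Heim": 0.6}

knn_probs, nearest = knn_distribution(query, datastore, k=2, temperature=10.0)
lam = distance_aware_weight(nearest, temperature=10.0)
mixed = interpolate(nmt_probs, knn_probs, lam)
```

Because the datastore here holds only the entries derived from a handful of retrieved sentences, an exhaustive scan like the `sorted` call above stays cheap, which is the intuition behind avoiding a search over the full corpus.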

Architecture

Recommended Citation

@article{dai2023simple,
  title={Simple and Scalable Nearest Neighbor Machine Translation},
  author={Dai, Yuhan and Zhang, Zhirui and Liu, Qiuzhi and Cui, Qu and Li, Weihua and Du, Yichao and Xu, Tong},
  journal={arXiv preprint arXiv:2302.12188},
  year={2023}
}